With more data, more sources and more tools comes more mess. In October 2025 the OSINT world is grappling not just with gathering information, but with verifying it. Misinformation, manipulated media and “fake” open-source claims are on the rise, which means verification and methodology matter more than ever.

Recent academic work has started to probe what we might call the “business of OSINT attention”: how open-source actors, volunteer channels and independent analysts race to publish, sometimes sacrificing quality for speed. In that context, OSINT is no longer just about finding data; it’s about confirming it, tracing provenance and building evidentiary chains. October’s landscape is a reminder that fast ≠ accurate.

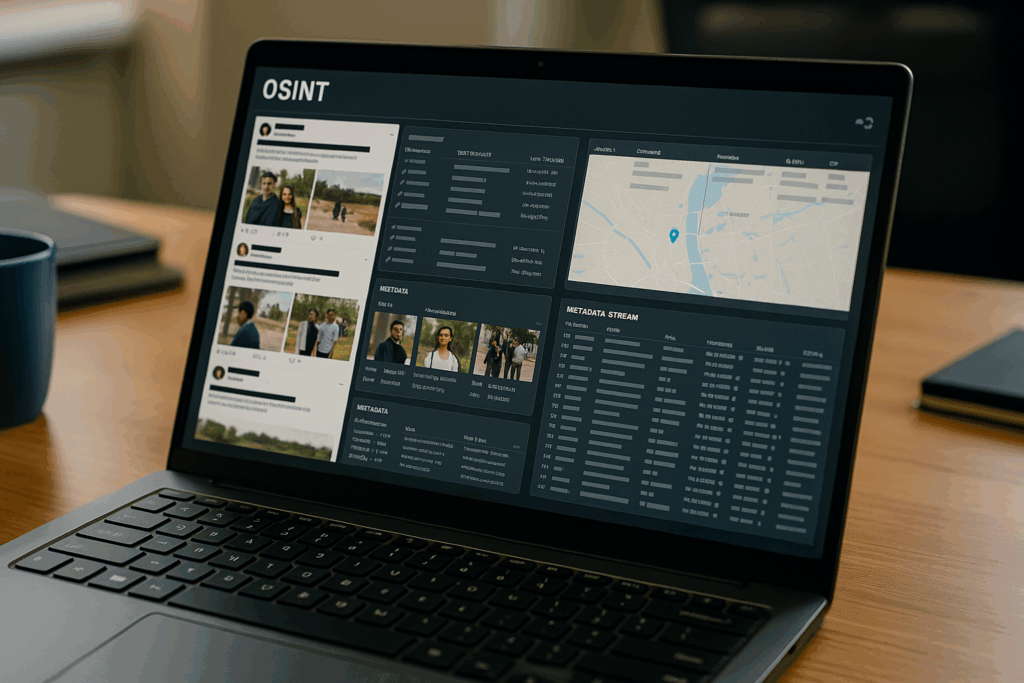

For one thing, analysts are using more advanced tools: AI-based consistency checks, timestamp verification, metadata forensics and cross-platform comparison. For another, organisations are becoming much more aware of the risks of “false positives” from open-source feeds. An OSINT insight that turns out to be wrong can be worse than no insight at all.

You’ll notice more demands for transparency in OSINT workflows: who collected the data, how was it processed, what tools were used? That’s because OSINT is increasingly used in serious contexts: corporate investigations, regulatory scrutiny, even judicial environments. Verification is no longer a luxury; it’s part of the baseline expectation.
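To make those transparency questions concrete, here is a minimal Python sketch of what a provenance log could look like. It is an illustration only, not a standard or anyone's established workflow: the `ProvenanceRecord` class, its field names and the `append_record` / `verify_chain` helpers are all hypothetical. The idea is simply that each record stores who collected an item, how it was processed and with what tool, plus a hash of the previous record, so that later edits to earlier entries become detectable.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class ProvenanceRecord:
    """One step in an evidentiary chain. All field names are illustrative."""
    collector: str       # who collected the data
    source_url: str      # where it was found
    tool: str            # what tool was used
    processing: str      # how it was processed
    collected_at: str    # ISO 8601 timestamp in UTC
    content_sha256: str  # hash of the raw item as collected
    prev_hash: str       # hash of the previous record ("" for the first)

    def record_hash(self) -> str:
        # Sorted keys give a stable serialisation, so equal records hash equally.
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


def append_record(chain, *, collector, source_url, tool, processing,
                  content, collected_at=None):
    """Return a new chain with one more record linked to the last one."""
    prev_hash = chain[-1].record_hash() if chain else ""
    record = ProvenanceRecord(
        collector=collector,
        source_url=source_url,
        tool=tool,
        processing=processing,
        collected_at=collected_at or datetime.now(timezone.utc).isoformat(),
        content_sha256=hashlib.sha256(content).hexdigest(),
        prev_hash=prev_hash,
    )
    return chain + [record]


def verify_chain(chain) -> bool:
    """Check that every record points at the hash of the one before it."""
    expected = ""
    for record in chain:
        if record.prev_hash != expected:
            return False
        expected = record.record_hash()
    return True
```

A chain built this way only flags changes to records that have a successor; the most recent entry would need an external anchor (a signed export, say) to be protected too. Even so, a log like this answers the basic questions a reviewer is likely to ask about an item.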

So if you’re working in OSINT or using OSINT reports, here’s a challenge: how confident are you in your verification process? What kind of audit trail exists for your data? October 2025 is not just about gathering open-source info; it’s about doing it right. The stakes are higher, the noise is louder, and bad intel can backfire.

References: